What is Project Origin?

One of the major problems arising out of today’s digital world is that of disinformation. This has been a growing issue over the past decade, particularly when carried out by political actors and foreign governments attempting to shape events to their advantage. With the isolation and remote work that COVID-19 brought last year, the dissemination of false information has become even more widespread, to the point that we could be on the verge of an “infocalypse,” as deepfake researcher Nina Schick puts it. Much of the general public has either lost trust in information sources or inadvertently contributed to spreading false news themselves. And when intentional disinformation campaigns do work, they can have enormous consequences. Project Origin is an answer to this concern.

The Problem of Media Manipulation

Disinformation efforts aim to delegitimize public leaders, affect election outcomes, or polarize societies, among other agendas. A report on 76 foreign influence efforts between 2013 and 2019 found that 26% of the campaigns targeted the United States and that 74% distorted objectively verifiable facts. Increasingly, this disinformation involves video that has been manipulated in ways people can’t detect. The FBI recently warned that deepfakes are the next big cyber threat, and this is an issue that needs to be addressed urgently. While there’s no way to stop video manipulation from happening, it is possible to create a “chain of trust” from the creator to the content consumer and alert the viewer if that chain has been broken.

The Origins of the Project

At a summit meeting last year, the Trusted News Initiative (TNI) announced Project Origin as a new coalition to detect and tag manipulated media. The project is led by the BBC, CBC/Radio-Canada, Microsoft, and The New York Times. Its goal is to create a widespread video authentication system that can identify material changed by anyone other than its creator, so that such media can be tagged with a notice telling viewers it has been manipulated. Microsoft has contributed technology built for this purpose.

The Chain of Authentication

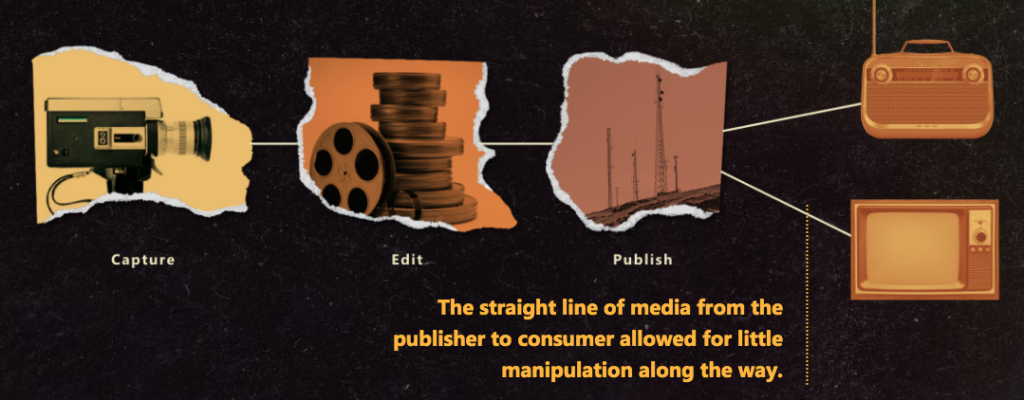

In the early days of traditional media, those who published content broadcast it directly to consumers, such as through television or radio. This left little room for tampering with it in between. Today, the long and weblike route that content travels allows for many—even exponential—opportunities for manipulation.

Because of this uncontained distribution, Project Origin aims to create a verifiable chain from the publisher to the consumer. There are three basic parts to this system of verification:

- Authenticate the video source. Publishers can finish their work with a certification of original authenticity in a tamper-proof ledger—a sort of digital fingerprint or watermark. This verifies where the video came from, or its provenance.

- Identify modifications. If the video is manipulated by another party after publication, this certification is broken and the watermark is no longer valid.

- Alert viewers when the video has been shown to be modified. With a warning alert, viewers can take a more informed approach, understanding that the video has been changed from its originally published version.
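The three steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Project Origin’s or Microsoft’s implementation: a SHA-256 hash stands in for the video’s fingerprint, and HMAC from the standard library stands in for the publisher’s certification. A real system would use public-key signatures, so that viewers can verify a video without holding the publisher’s secret. The key and all function names are hypothetical.

```python
import hashlib
import hmac

# Hypothetical publisher secret. HMAC is a stdlib stand-in: a real system
# would use a public-key signature so anyone can verify without this key.
PUBLISHER_KEY = b"example-publisher-secret"


def fingerprint(video_bytes: bytes) -> str:
    """SHA-256 digest of the raw bytes, used as the video's fingerprint."""
    return hashlib.sha256(video_bytes).hexdigest()


def certify(video_bytes: bytes, key: bytes = PUBLISHER_KEY) -> str:
    """Step 1: the publisher binds its key to the video's fingerprint."""
    return hmac.new(key, fingerprint(video_bytes).encode(), hashlib.sha256).hexdigest()


def is_unmodified(video_bytes: bytes, tag: str, key: bytes = PUBLISHER_KEY) -> bool:
    """Step 2: recompute the tag; any change to the bytes breaks the match."""
    return hmac.compare_digest(certify(video_bytes, key), tag)


def viewer_notice(video_bytes: bytes, tag: str) -> str:
    """Step 3: translate the check into a message shown to the viewer."""
    if is_unmodified(video_bytes, tag):
        return "Verified: matches the version the publisher certified."
    return "Warning: this video has been changed since it was published."


original = b"stand-in for raw video bytes"
tag = certify(original)
print(viewer_notice(original, tag))                      # Verified: ...
print(viewer_notice(original + b"spliced frame", tag))   # Warning: ...
```

Because changing even a single byte produces a different SHA-256 digest, any edit after publication (here, an appended "spliced frame") makes verification fail, and that failure is what triggers the viewer alert.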

Authentication of Media via Provenance (AMP)

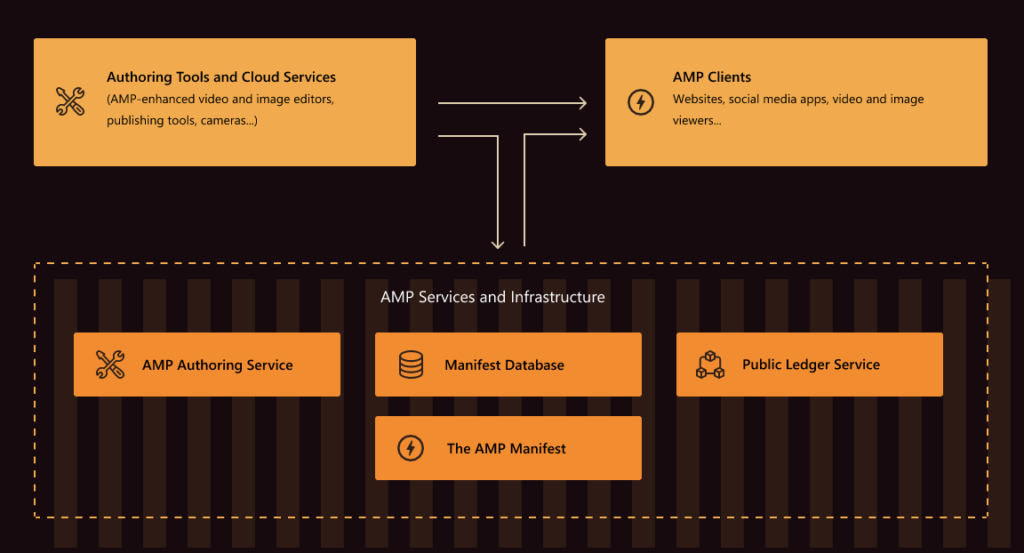

The ability for publishers to certify the authenticity of their original work is called Authentication of Media via Provenance (AMP). Publishers can attach a sort of digital signature to a video, which Microsoft calls a manifest. The manifest records who the publisher is, along with any metadata the publisher chooses to include. It can be embedded into the video itself, and it can also be uploaded to databases and registered on public ledger or blockchain services. There are a couple of ways to create the manifest: the publisher can generate it with AMP tools, or its creation can be integrated into a cloud-based media distribution workflow. For more details and helpful graphics on how AMP works, see Microsoft’s AMP breakdown.
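To make the manifest and ledger ideas concrete, here is a toy sketch in Python. The field names, the `Manifest` and `Ledger` classes, and the publisher name are hypothetical and do not reflect AMP’s actual format. Signing the manifest is omitted to keep the focus on what it records and on how a hash-chained, append-only log makes past entries tamper-evident.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class Manifest:
    """Hypothetical manifest: publisher identity, chosen metadata, and a
    SHA-256 hash of the content. Field names are illustrative, not AMP's."""
    publisher: str
    content_hash: str
    metadata: dict = field(default_factory=dict)

    def to_json(self) -> str:
        # sort_keys gives a stable serialization, so equal manifests hash equally
        return json.dumps(
            {"publisher": self.publisher, "content_hash": self.content_hash,
             "metadata": self.metadata},
            sort_keys=True,
        )


class Ledger:
    """Toy append-only ledger: each entry stores the previous entry's hash,
    so rewriting an old manifest breaks every later link."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[tuple[str, str, str]] = []  # (prev_hash, body, entry_hash)

    def register(self, manifest: Manifest) -> str:
        prev = self.entries[-1][2] if self.entries else self.GENESIS
        body = manifest.to_json()
        entry_hash = hashlib.sha256((prev + body).encode()).hexdigest()
        self.entries.append((prev, body, entry_hash))
        return entry_hash

    def is_intact(self) -> bool:
        # Walk the chain and recompute every link from the start
        prev = self.GENESIS
        for stored_prev, body, entry_hash in self.entries:
            if stored_prev != prev:
                return False
            if hashlib.sha256((prev + body).encode()).hexdigest() != entry_hash:
                return False
            prev = entry_hash
        return True

    def find(self, video_bytes: bytes) -> Manifest | None:
        # Look up the manifest whose content hash matches these exact bytes
        digest = hashlib.sha256(video_bytes).hexdigest()
        for _, body, _ in self.entries:
            data = json.loads(body)
            if data["content_hash"] == digest:
                return Manifest(**data)
        return None


video = b"stand-in for raw video bytes"
ledger = Ledger()
ledger.register(Manifest("Example Broadcaster",  # hypothetical publisher
                         hashlib.sha256(video).hexdigest(),
                         {"title": "Evening news"}))
print(ledger.find(video).publisher)   # Example Broadcaster
print(ledger.find(video + b"edit"))   # None
print(ledger.is_intact())             # True
```

Chaining each entry to the previous entry’s hash is what makes the log tamper-evident: editing an old manifest changes its hash, so `is_intact()` reports a break. Public blockchain services add replication and consensus on top of this basic idea, so that no single party can quietly rewrite the whole chain.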

Project Origin and Beyond

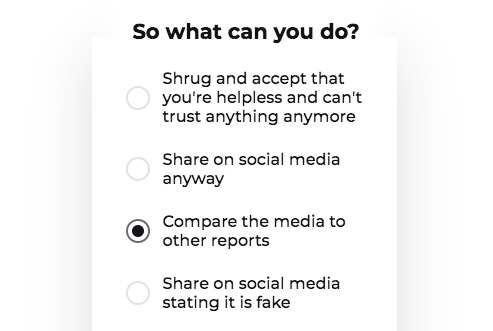

The hope of those building Project Origin is that this practice will become a widely used norm, backed by a formal industry standard developed by a coalition similar to the Alliance for Open Media. Efforts to put this together are already underway: Adobe, Intel, and the BBC are among those founding the Coalition for Content Provenance and Authenticity. Of course, even this chips away at only one part of the larger problem of online disinformation. Project Origin does not guarantee that a video is trustworthy; it only shows whether the video has been tampered with since publication. It’s still up to viewers to consider the reliability of content and its source, even when it is in its original form.

To learn more instead of shrugging helplessly, check out these resources and pathways to media literacy and authentication:

- Video Authenticator, Reality Defender, and Sensity: these software tools analyze media and provide a probability that it is fake or manipulated.

- Spot the Deepfake: take the interactive quiz to test your deepfake detection skills.

- NewsGuard and other fact-checking websites: get a rating of how reliable a news source or claim is.

- The Paris Call for Trust and Security in Cyberspace: see nine principles of information protection to strive for and, if you like, register your organization’s support.