The Problem(s) with ChatGPT

ChatGPT has quickly become a big buzzword, and by now many of us have given the generative AI model a try. We’ve been amazed at how eloquently it can write. We’ve marveled at that cursor laying out an answer in less time than it took us to formulate the question. We’ve brainstormed ways it can help us brainstorm even better. But it’s wise not to overlook the problem(s) with ChatGPT. Here are some of the issues we need to keep in mind as we use this smart but imperfect tool.

The Dangerous

One of the most blatant problems with ChatGPT is that people can use it for outright malicious purposes, like creating malware and phishing emails. Cybersecurity experts at Check Point Research demonstrated how easy it was to make a weaponized Excel file by simply asking ChatGPT to create it for them. Then they politely asked it to write a phishing email that could be used to distribute the malware, and it easily delivered there too. Funky spelling and grammar are typically helpful red flags for spotting a phishing email, but ChatGPT writes well and doesn’t make those mistakes. So phishing scams will likely become harder to identify and avoid.

The model is trained to refuse harmful requests, such as writing violent or racist texts. (You can even use it to help spot subtle biases in your own or another’s writing.) But, apparently, simple instructions without “harmful” keywords can easily do harm.

The Mundane

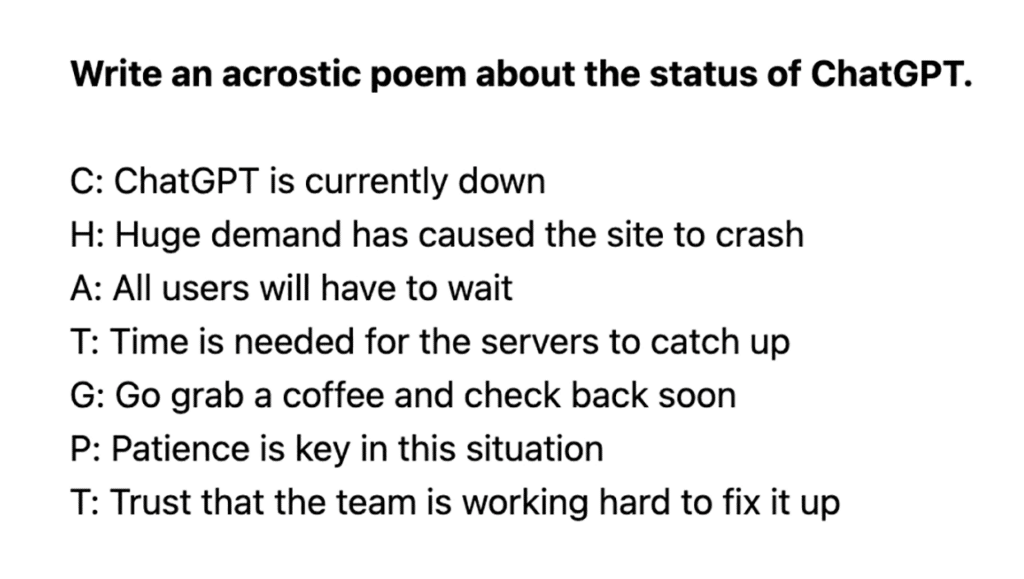

On the other hand, sometimes problems with ChatGPT are just buggy inconveniences. A good chunk of the time I try using it, I first get an error message or a notification that the site is overloaded. At least it comes up with cute ways to say it:

ChatGPT also has a limitation with word count. For example, I asked it to write me a 2,000-word story, and it stopped mid-sentence in its response. Upon my inquiry, it claimed to have stopped because it reached my word count request—but when I checked, I saw that it had only given me a little over 650 words. It turns out it has its own word limit, “roughly 650.”

Another big limitation is its timeline of knowledge: ChatGPT’s training data only goes up to 2021, so any current news or information cannot be incorporated into its responses. With everything changing and advancing so rapidly, that’s a pretty big information gap.

The In-Between: Cheats, Lies, & Money

Educators are expecting a flood of students attempting to cheat on written assignments, such as this philosophy student who was caught at it. Not only does this carry the potential to damage the learning process and inhibit people’s writing skills, but it can also sometimes give them the wrong information. So a student relying on ChatGPT to write a history paper, for example, could get inaccurate facts and thus learn false content while his writing skills deteriorate (if he bothers to read it; if not, maybe his reading skills will decline too!).

Aside from schooling, this could create problems anytime someone goes to the machine learning model expecting to get facts out of it. Whenever it provides information, its output needs to be fact-checked by a human knowledgeable on the subject. Checking sources is usually not an option, since ChatGPT tends to give answers without citing where it got the information.

While you can’t know what’s fed into its responses, OpenAI can and will know what you put into your prompts. So a certain amount of privacy must be given up in order to use ChatGPT. The website openly states that conversations may be reviewed for system improvement and that sensitive information should never be shared. (Privacy is an important issue, but in this case I think the review is necessary to reduce misuse of the technology—so this factor is just something to keep in mind.)

And then there’s money. For now, OpenAI is offering the chatbot’s services for free to anyone who wants to use them. Multiple articles I’ve read describe what OpenAI has to spend in order to do that as “an eye-watering amount of money.” Eventually, CEO Sam Altman has admitted, the company will need to monetize it in order for its use to be sustainable. But then there will be the issue of some people wielding a powerful tool that others don’t have access to, which defeats OpenAI’s mission of benefiting all of humanity.

Getting Around the Problem(s) with ChatGPT

None of this even mentions the huge energy and water problem with powering ChatGPT—you can read about that here. Many of its problem areas listed above center around bad intentions and bad information. So its most beneficial use comes when people use it honestly, either as a time-saving assistant or a creativity booster, in scenarios where facts aren’t crucial. For now, if we treat it less like a world-shattering disruption and more like an extension of other smart assistants we use, we can get some helpful use out of it without causing too much trouble. But, as the AI model and I agreed in a recent pondering, “Troublemakers will always be lurking around the corner, and we’ll need to stay one step ahead to avoid being caught in their mischievous webs.”
